6. Summary & Key Takeaways of the course

Over the past few weeks, this course has covered multiple topics. Below are the 10 key takeaways:

Sustainable IT Monitoring solutions are becoming an operational necessity for all companies managing a large IT infrastructure, and a commercial necessity for IT and Cloud service providers

The electricity consumption of the IT sector is already significant and is set to grow tremendously. In the US, the expansion of data centers is one of the main drivers of electricity demand growth, and by 2030 the electricity demand of data centers is expected to exceed that of heavy industries in parts of the world.

With regulations strengthening, companies are under pressure to monitor their IT emissions more closely, and IT service providers to offer more transparency to their customers. Because FinOps and GreenOps are complementary, Sustainable IT Monitoring solutions make operations more efficient and sustainable, delivering both financial and sustainability benefits.

Leading IT companies today are already implementing these solutions with the help of Electricity Maps Enterprise API.

Electricity is a core component of IT carbon footprint and cost, and electricity grid signals are key when developing Sustainable IT Monitoring solutions

Many signals can be tracked on the electricity grid: load, electricity mix, electricity prices, percentage of renewable or carbon-free energy sources, carbon intensity, and more. Each brings different insights depending on the time perspective considered. Historical data enables year-over-year trend analysis, accurate carbon accounting of IT emissions, and monthly dashboards of cost and emissions. Real-time data unlocks insights into how to better manage IT workloads and helps raise awareness among teams. Multi-day forecasts empower advanced, automated decision-making that optimizes usage, increases efficiency, and reduces costs and emissions.

Because electricity grids are highly dynamic and complex systems, data granularity is crucial

Electricity demand and electricity generation, which the grid connects, are constantly changing. A multitude of parameters fluctuate every second on the electricity grid, and each grid has its own specificities, even when located in the same region or country. This means spatial and temporal granularity are crucial when building solutions.

(Gif showcasing how the carbon intensity varies depending on time granularity)

(Gif showcasing how the carbon intensity varies depending on geographic granularity)

Insights increase with granularity. Moving from a yearly to an hourly granularity improves accuracy by 20% on average worldwide, and by more than 40% in several grids
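To make the granularity point concrete, here is a minimal sketch comparing hourly accounting with accounting based on a single average carbon intensity for the same consumption. All loads and intensities are hypothetical values chosen only to show the mechanics; they are not meant to reproduce the 20% or 40% figures above.

```python
# Compare hourly carbon accounting with a single average intensity.
# All values are hypothetical and only illustrate the mechanics.


def emissions_hourly(load_kwh, intensity_g_per_kwh):
    """Sum of each hour's load multiplied by that hour's carbon intensity (gCO2eq)."""
    return sum(load * ci for load, ci in zip(load_kwh, intensity_g_per_kwh))


def emissions_flat(load_kwh, average_intensity_g_per_kwh):
    """Total load multiplied by one average carbon intensity (gCO2eq)."""
    return sum(load_kwh) * average_intensity_g_per_kwh


# Four hypothetical hours: load peaks when the grid is dirtiest.
load = [10.0, 10.0, 30.0, 30.0]            # kWh per hour
intensity = [100.0, 100.0, 400.0, 400.0]   # gCO2eq/kWh per hour
average_intensity = sum(intensity) / len(intensity)  # unweighted average: 250

hourly = emissions_hourly(load, intensity)
flat = emissions_flat(load, average_intensity)
print(hourly, flat, f"{(hourly - flat) / hourly:.0%}")
```

In this made-up case the single average underestimates emissions by about 23%, because consumption is concentrated in high-intensity hours; with the opposite pattern it would overestimate them.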

When sourcing grid signals, make sure they capture electricity flows, stay compliant with regulations, and are verifiable

Besides granularity, capturing grid flows is important for obtaining the most accurate grid signals. Electricity exchanged between grids through imports and exports accounts for a significant share of the electricity available on a grid. For your Sustainable IT Monitoring solutions to be usable in emissions reporting and to help you or your clients reach emissions reduction targets, the signals they use must comply with the main regulations and standards. Ultimately, your solutions might require an audit, and using verifiable signals is a must-have in this context.
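As a rough illustration of why flows matter, the sketch below computes a single-hop, import-adjusted carbon intensity for one zone. It is a simplification under stated assumptions (exports come from the zone's own generation, imports carry only the direct neighbor's intensity) and not a full flow-tracing algorithm, which follows flows across the whole interconnected network. All numbers are hypothetical.

```python
# Simplified, single-hop consumption-based carbon intensity for one zone.
# Assumptions (not a full flow-tracing algorithm):
#   - exports are drawn from the zone's own generation mix;
#   - imports carry the exporting zone's own intensity (no onward tracing).


def consumption_intensity(local_gen_mwh, local_intensity, exports_mwh, imports):
    """Return gCO2eq/kWh of the electricity consumed in the zone.

    imports is a list of (imported_mwh, exporter_intensity_g_per_kwh) tuples.
    """
    local_consumed_mwh = local_gen_mwh - exports_mwh
    if local_consumed_mwh < 0:
        raise ValueError("exports cannot exceed local generation in this sketch")
    import_mwh = sum(mwh for mwh, _ in imports)
    total_mwh = local_consumed_mwh + import_mwh
    weighted = local_consumed_mwh * local_intensity + sum(mwh * ci for mwh, ci in imports)
    return weighted / total_mwh


# Hypothetical zone: 800 MWh generated at 400 g/kWh, 100 MWh exported,
# 300 MWh imported from a neighbor at 50 g/kWh.
print(consumption_intensity(800, 400, 100, [(300, 50)]))
```

Here the consumed electricity ends up at 295 gCO2eq/kWh rather than the 400 gCO2eq/kWh of local production, which is the kind of gap that ignoring flows would hide.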

Substantial challenges arise when sourcing grid data, and products like Electricity Maps Enterprise API solve them for your team

This data can be sourced from grid operators or government agencies in each part of the world. Some data sources, such as the IEA and Ember, also provide data on a global scale, but not with enough time granularity to accurately measure IT emissions and derive actionable insights.

Sourcing data from these providers raises several challenges around data availability, quality, and ingestion, as well as the additional transformations required, such as flow-tracing or applying emission factors to calculate carbon intensity. Data providers such as Electricity Maps or Singularity provide this information directly.
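Applying emission factors is conceptually simple: carbon intensity is the generation-weighted average of the factors of each source in the mix. The sketch below shows that calculation for production only, before any flow adjustment. The factor values and the generation mix are illustrative placeholders, not the factors used by any particular provider or regulation.

```python
# Production-based carbon intensity from a generation mix and emission factors.
# The factors below are illustrative lifecycle values (gCO2eq/kWh), not the
# factors used by any particular data provider or regulation.
EMISSION_FACTORS = {
    "coal": 820,
    "gas": 490,
    "hydro": 24,
    "nuclear": 12,
    "solar": 45,
    "wind": 11,
}


def carbon_intensity(generation_mw):
    """Generation-weighted average emission factor of a mix, in gCO2eq/kWh."""
    total_mw = sum(generation_mw.values())
    if total_mw <= 0:
        raise ValueError("the generation mix must have positive total output")
    weighted = sum(mw * EMISSION_FACTORS[source] for source, mw in generation_mw.items())
    return weighted / total_mw


# Hypothetical hourly snapshot of a grid's generation mix, in MW.
mix = {"coal": 100, "gas": 300, "nuclear": 400, "wind": 200}
print(carbon_intensity(mix))  # 236.0
```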

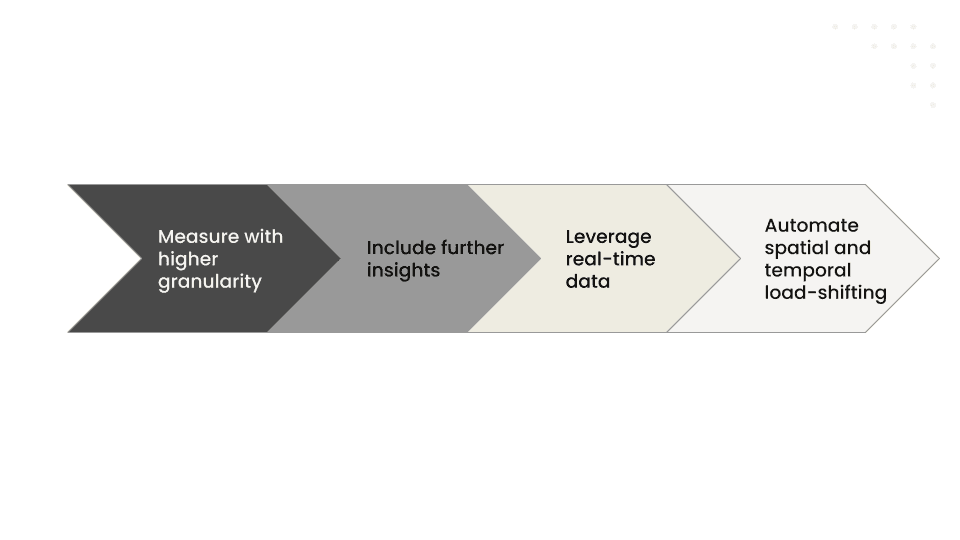

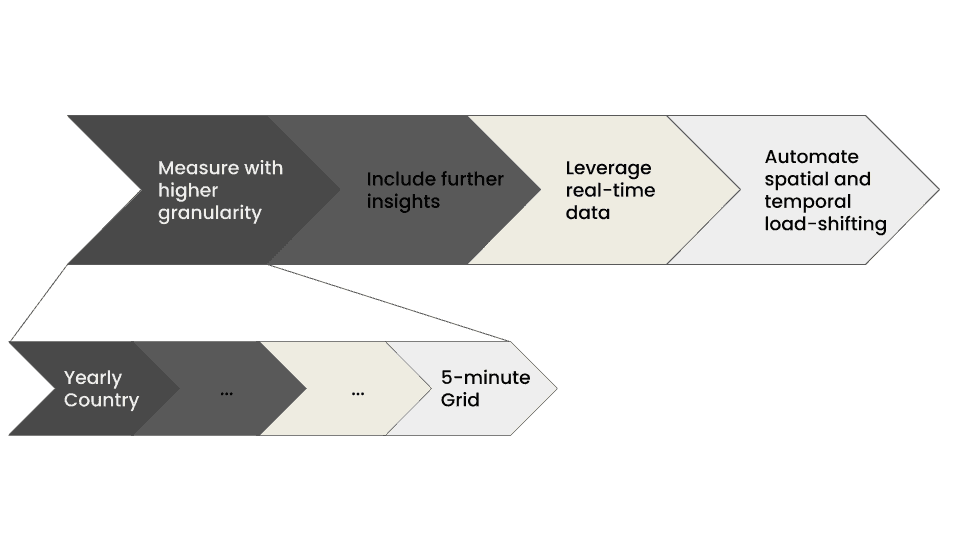

Building sustainable IT Monitoring solutions is a journey that can be divided into four steps:

Step 1 - Gain visibility by increasing granularity in your IT emissions measurement

The first goal of sustainable IT solutions is to increase visibility into IT energy use and emissions. IT is one of the fastest-growing sources of corporate emissions and costs, yet most organizations lack the visibility they need. Gaining this visibility is the first step toward compliance, transparency, and long-term reduction: it unlocks insights into cost and emissions reduction and builds customer trust. Higher granularity means better insights.

Step 2 - Get smarter about your electricity consumption by including more grid signals in your monitoring

Many signals about electricity grids can be sourced beyond the flow-traced carbon intensity. Renewable or carbon-free energy percentage data enables tracking progress toward renewable-matching goals. Electricity price data enables cost reductions alongside emissions savings. Electricity mix data provides more explainability and informs best practices.
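One simple way to use a carbon-free energy percentage signal is to weight it by your own hourly consumption, which shows how much of your electricity was drawn while the grid was carbon-free. The sketch below assumes this hypothetical metric; contractual matching schemes (for example, those based on energy certificates) follow their own rules, and all values are made up.

```python
# Consumption-weighted carbon-free share: how much of your consumption happened
# while the grid was carbon-free, computed hour by hour.
# Hypothetical metric for illustration; contractual matching schemes differ.


def hourly_cfe_share(load_kwh, grid_cfe_percent):
    """Fraction of total consumption covered by the grid's hourly carbon-free share."""
    total = sum(load_kwh)
    if total <= 0:
        raise ValueError("total consumption must be positive")
    matched = sum(load * pct / 100 for load, pct in zip(load_kwh, grid_cfe_percent))
    return matched / total


load = [10, 10, 20, 20]        # kWh per hour (hypothetical)
grid_cfe = [80, 60, 30, 50]    # grid carbon-free percentage per hour (hypothetical)
share = hourly_cfe_share(load, grid_cfe)
print(f"{share:.0%}")  # 50%, versus an unweighted average of 55%
```

The gap between 50% and the unweighted 55% comes from consuming more during the hours when the grid is less carbon-free.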

Step 3 - Start optimizing by leveraging real-time data

After gaining more visibility and getting smarter about IT electricity usage, it's time to reduce emissions and costs. While historical data enables identifying opportunities and best practices, real-time data is necessary given the highly dynamic nature of electricity grids. With real-time data, your team and customers unlock better decision-making to consume cleaner and cheaper electricity. Spatial flexibility is better leveraged with a real-time view of the grid that allows moving workloads to where electricity prices and carbon intensity are the lowest.

Step 4 - Maximize emissions and cost savings by implementing automated spatial and temporal load-shifting

Sustainable IT solutions should not stop at better monitoring of IT electricity usage. The end goal is to reduce costs and emissions, and this is what automated load-shifting delivers. Each workload has specific requirements and degrees of spatial and temporal flexibility. Automation combined with multi-day-ahead forecasts of electricity signals ensures requirements are met while using all available flexibility to its full potential for emissions and cost reduction.

That's it! We are now at the end of our course.

We will send an email later this week asking for your feedback. As we disclosed from the beginning, this is the first time we have produced educational content for beginner practitioners. We've thoroughly enjoyed producing this content, and we're thankful to all of you who followed along and provided feedback along the way.

We want to keep building on this material, or repackage it according to your feedback. If you've found value in this course, please keep an eye on your inbox and take 5 minutes to answer our survey.

Thank you all,

The Electricity Maps team

5. Deep dive into leveraging real-time and forecasted data for flexibility

Recap of previous lessons

In the first two lessons, we introduced the concept of Sustainable IT Monitoring, some basics of electricity grids, and relevant grid signals. We also discussed key considerations when selecting these signals and the challenges associated with sourcing them. In the third lesson, we introduced the Sustainable IT Monitoring journey, and in the last lesson, we took a deep dive into its first two steps: measuring IT emissions with higher granularity and adding further insights by leveraging more grid signals.

Access last week’s lesson here for more details on the first two steps of a Sustainable IT Monitoring journey. Today, we will take a deep dive into the last two steps of the journey: leveraging real-time data and implementing automated load-shifting.

Next week, the last lesson will wrap up the course with a summary of the different topics addressed and the key takeaways.

Once again, we value all feedback received so far and encourage you to keep submitting your opinion on this course and the different lessons.

Please also submit any questions you may have so that we can address them in the final lesson next week. This week's feedback form also includes a field for questions for next week's Q&A section: 1-minute survey

In today’s lesson, we'll look at optimizing IT loads across various dimensions and signals.

Start optimizing your consumption with real-time data

The Sustainable IT Monitoring journey naturally starts with historical reports and dashboards presenting end-of-month statistics. This is already very valuable information for companies with a large IT footprint or that provide IT services to other companies. For example, data centers can build reports for their customers, and a large enterprise can map its own IT infrastructure to see where and when its emissions come from.

These reports are a first step in the journey: they enable a better understanding of the current situation and identify where opportunities lie and where management is most needed. Moving closer to real time is the necessary third step in this maturity journey.

As highlighted in the previous lessons of this course, the state of the grid is very dynamic; it changes in space and time. This means that while historical data can be very useful for deriving best practices, such as favoring IT loads in the middle of the day in California and in the middle of the night in Ontario, moving to real-time data is necessary to fully leverage the emissions and cost reduction potential of IT workloads.

Spatial flexibility

In the examples below, we go through spatial load-shifting. Not every company can do this, but two broad categories can:

1. Companies with a lot of cloud compute: hyperscalers and data centers themselves, as well as their customers with large cloud infrastructures

2. Multi-location enterprises with distributed, owned IT infrastructure, in industries like telecom, banking, pharma, and sometimes even heavy industry and manufacturing.

Optimising for emissions

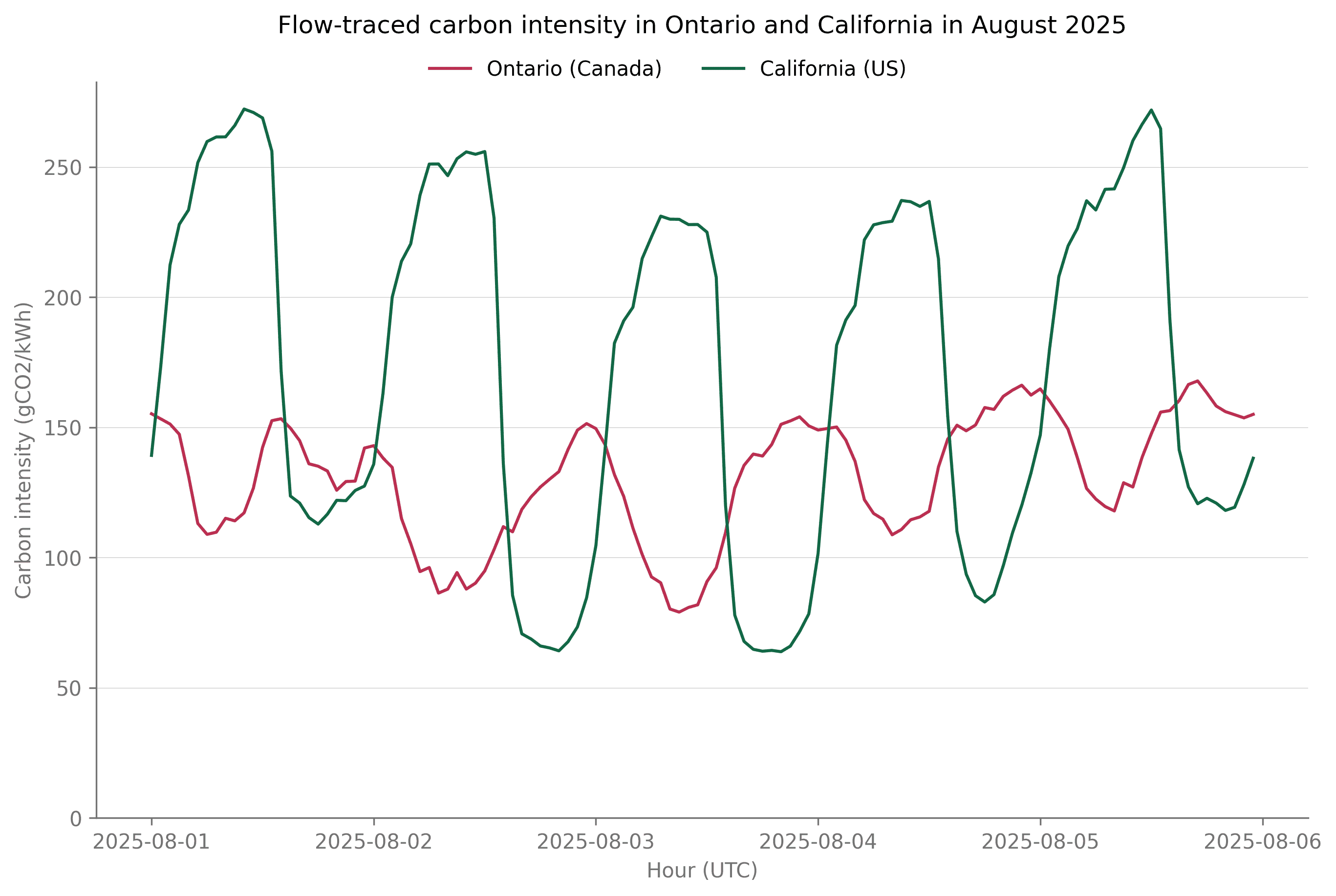

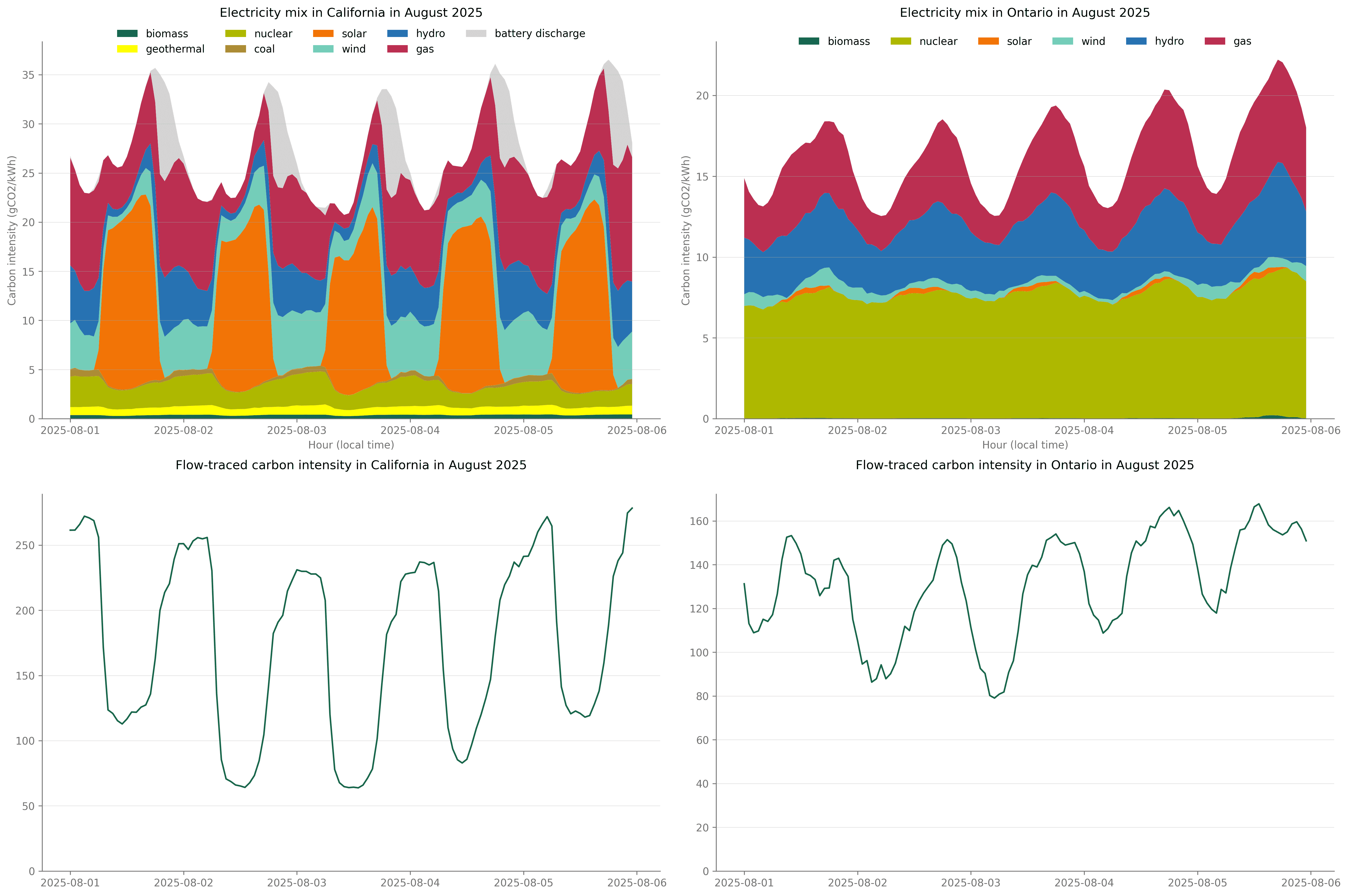

Suppose we need to run a workload in a data center, and we can choose between two data centers: one located in Ontario and one located in California. With historical data only, we would choose to run our workloads in Ontario, where the grid carbon intensity in August (134 gCO2/kWh) was almost half that of California (242 gCO2/kWh). However, real-time data leads to more informed decisions. The cleanest grid actually depends on the time of day, since the two grids have completely different carbon intensity variations, as can be seen in the image below:

(Graph plotting Ontario and California's carbon intensity. Note how the curves move in almost opposite directions, so the location with the lowest carbon intensity changes depending on the time of day.)

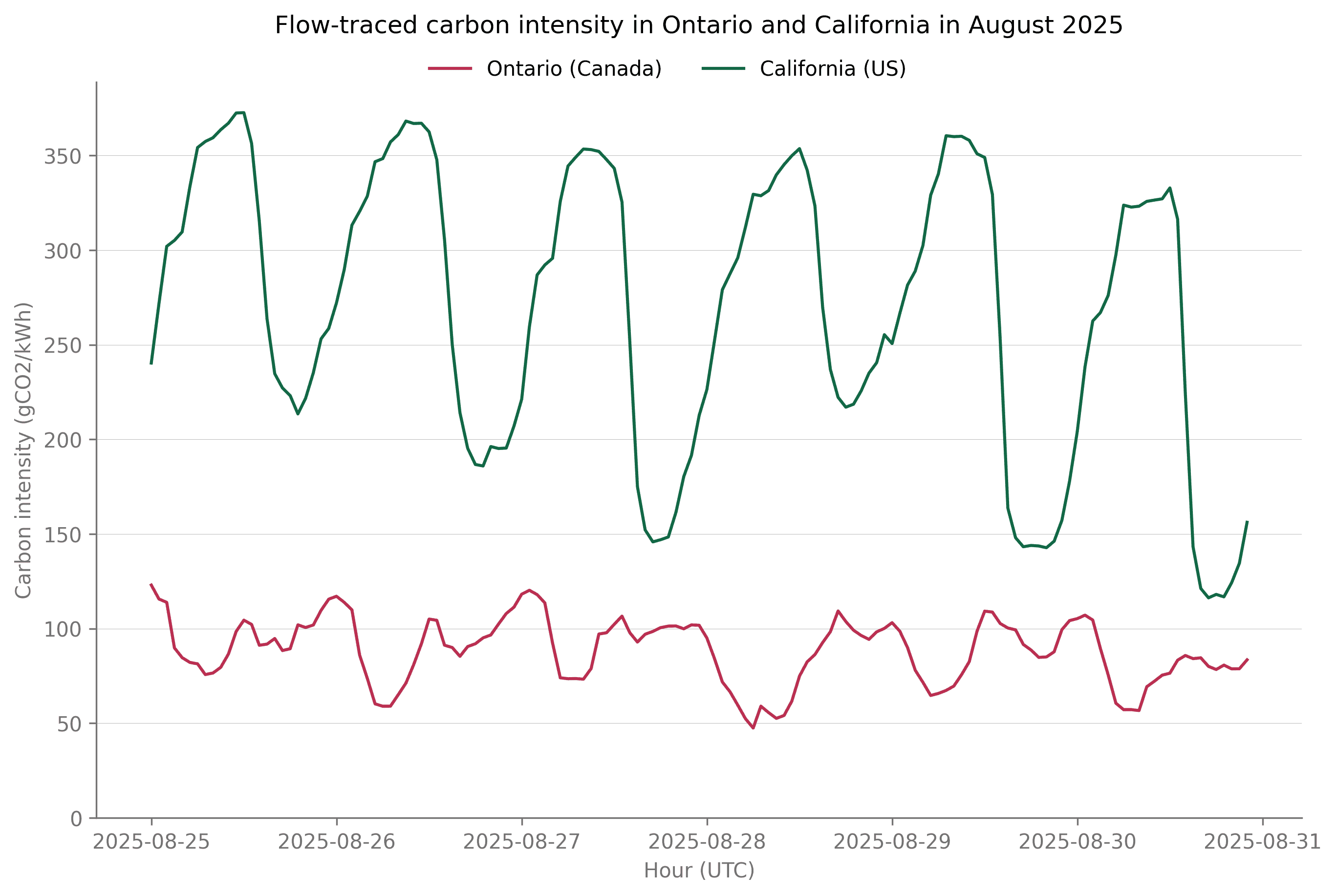

It even depends on the week itself: during some weeks, carbon intensity can remain higher in California even when solar generation is high. At the end of August, as wind generation was low and demand on the grid was high, the grid carbon intensity in California remained above that of Ontario for several consecutive days.

(A graph showing Ontario and California's carbon intensities, where Ontario's is significantly lower for the entire time)

We highlighted in previous lessons how historical data could already enable identifying best practices and recommendations to lower costs and emissions of IT. The above example shows the limitations of this approach, as a consequence of the very dynamic nature of the electricity grid.

Real-time data unlocks better decision-making and empowers your team and customers to more accurately identify the best locations for cleaner and cheaper electricity. It enables live decisions that leverage spatial flexibility as fully as possible: with a real-time view of the electricity grid, we can choose to run our workload on the grid with the lowest current carbon intensity.
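The decision described above reduces to picking the minimum of the current values. The sketch below assumes you already have a snapshot of real-time carbon intensity per location; the values are hypothetical, loosely following the daily patterns discussed for Ontario and California, and no data API is called.

```python
# Pick the location with the lowest current carbon intensity.
# The snapshots are hypothetical; in practice they would come from a real-time
# grid data feed, which this sketch does not call.


def cleanest_location(current_intensity):
    """Return the key with the lowest current carbon intensity (gCO2eq/kWh)."""
    return min(current_intensity, key=current_intensity.get)


midday = {"Ontario": 110.0, "California": 60.0}
night = {"Ontario": 40.0, "California": 250.0}
print(cleanest_location(midday), cleanest_location(night))  # California Ontario
```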

What about the cost?

For many companies, electricity represents a non-trivial portion of their costs, so they often want to optimize for electricity costs as well.

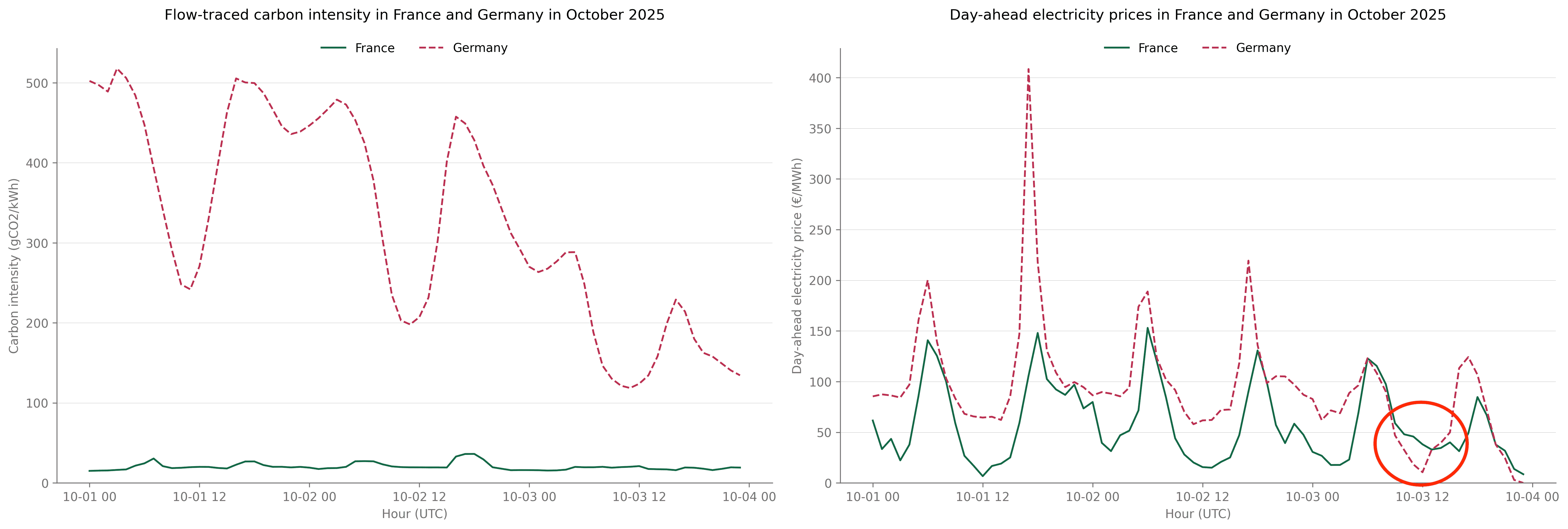

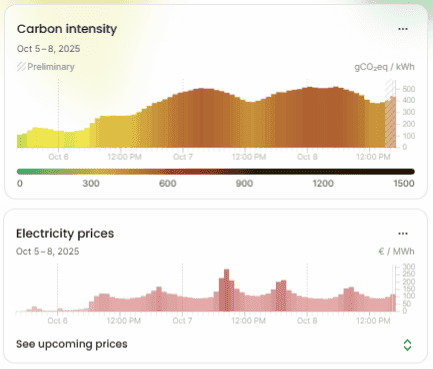

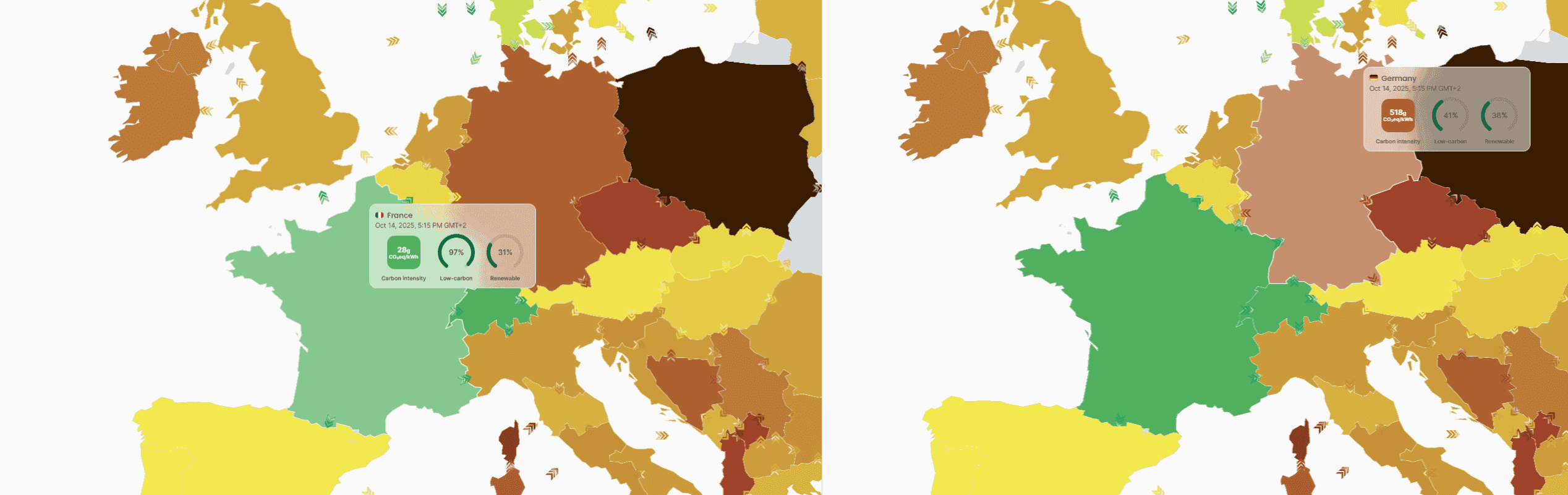

The differences in carbon intensity and electricity prices between grids, even grids located in the same region, can at times be very large. France and Germany have very different grid carbon intensities, and at times the price differences between the two grids can also get very large, as happened several times in October 2025.

In the example below, no matter which real-time signal you use when optimising the placement of a workload (digital or otherwise electricity-intensive), you will opt to run it in France, with one exception: if you were to run the workload at noon on the 3rd of October and use price as the optimisation parameter, you would choose Germany, with up to a 50% price discount compared to France. You would also increase the carbon emissions of your workload by about 400%, showing that a lower price does not always come with lower emissions, which is important to keep in mind.

Note: Location-based flexibility with regard to price depends on (1) your ability to move workloads, which is relatively easy for IT jobs in distributed data centers, albeit limited to the available cloud locations; and (2) your electricity price being dynamic and following the day-ahead price in a predictable way.

(Two graphs comparing the French and German grids in October 2025. For the entire duration, the carbon intensity is much lower in France. The price is also lower in France for almost the entire time. At one point, the price of electricity in Germany drops below that of France, potentially saving 50% of the cost. The carbon intensity, however, is about 4-5x higher in Germany than in France.)
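The France/Germany example boils down to the fact that "best location" depends on which signal you minimize. The sketch below makes that explicit with a hypothetical single-hour snapshot (not the actual October 2025 values) and reports the trade-off between the price-optimal and carbon-optimal choices.

```python
from dataclasses import dataclass


@dataclass
class GridSnapshot:
    price_eur_per_mwh: float     # day-ahead price at the chosen hour
    intensity_g_per_kwh: float   # carbon intensity at the same hour


def best_by(signal, grids):
    """Return the grid name that minimizes the given signal attribute."""
    return min(grids, key=lambda name: getattr(grids[name], signal))


# Hypothetical snapshot at one hour; not actual October 2025 values.
grids = {
    "FR": GridSnapshot(price_eur_per_mwh=80.0, intensity_g_per_kwh=30.0),
    "DE": GridSnapshot(price_eur_per_mwh=40.0, intensity_g_per_kwh=150.0),
}
by_price = best_by("price_eur_per_mwh", grids)
by_carbon = best_by("intensity_g_per_kwh", grids)

price_saving = 1 - grids[by_price].price_eur_per_mwh / grids[by_carbon].price_eur_per_mwh
emissions_ratio = grids[by_price].intensity_g_per_kwh / grids[by_carbon].intensity_g_per_kwh
print(by_price, by_carbon, f"{price_saving:.0%} cheaper, {emissions_ratio:.1f}x the emissions")
```

Reporting both numbers side by side makes the trade-off visible instead of silently optimizing one signal at the expense of the other.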

We highlighted how historical data could serve as a basis for decision-making, though with limitations, and how real-time data could alleviate some of these limitations when it comes to the location of the emissions.

Let’s now go to the next dimension relevant to flexibility:

Time flexibility

In the examples above, we talked about shifting loads based on the real-time view of electricity signals.

In reality, workloads do not run instantaneously. Big jobs, such as AI training, database updates, machine learning pipelines, or any sort of big data manipulation, need to run for hours, sometimes days.

This introduces the problem of knowing how those signals will evolve: if I start a workload now and it needs to run for the next 6 hours, what will the different signals look like hour by hour, or even in 5-minute intervals?

What if we wanted to expand that timeline and, instead of starting a job now, schedule workloads in the future? This is where forecasting comes in. Various providers offer different types of forecasts. At Electricity Maps, we forecast all of our signals for the next 72 hours, in hourly and sub-hourly increments.

Our customers use these forecasts for EV smart charging, scheduling updates, and running cloud workloads when electricity is cheapest or greenest.
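With an hourly forecast in hand, the "when should a 6-hour job start?" question becomes a sliding-window search: compute the average signal over every contiguous window of the job's duration and keep the lowest. The sketch below assumes a plain list of hypothetical hourly carbon intensities; the same function works unchanged for a price forecast.

```python
# Find the start hour that minimizes a job's average signal over a forecast.
# The forecast values are hypothetical hourly carbon intensities (gCO2eq/kWh);
# the same function works for a price forecast.


def best_start(forecast, duration_h):
    """Return (start_index, mean) of the lowest-mean contiguous window of duration_h hours."""
    if not 0 < duration_h <= len(forecast):
        raise ValueError("duration must fit inside the forecast horizon")
    window_sum = sum(forecast[:duration_h])
    best_index, best_sum = 0, window_sum
    # Slide the window one hour at a time: add the new hour, drop the oldest one.
    for start in range(1, len(forecast) - duration_h + 1):
        window_sum += forecast[start + duration_h - 1] - forecast[start - 1]
        if window_sum < best_sum:
            best_index, best_sum = start, window_sum
    return best_index, best_sum / duration_h


forecast = [300, 280, 250, 200, 150, 120, 110, 130, 180, 240, 290, 310]
start, mean = best_start(forecast, duration_h=4)
run_now_mean = sum(forecast[:4]) / 4
print(start, mean, f"{1 - mean / run_now_mean:.0%} lower than starting now")
```

For a 72-hour hourly forecast this is a few dozen additions, so it can be re-run every time a new forecast arrives.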

Below, we’ll go through a couple of examples:

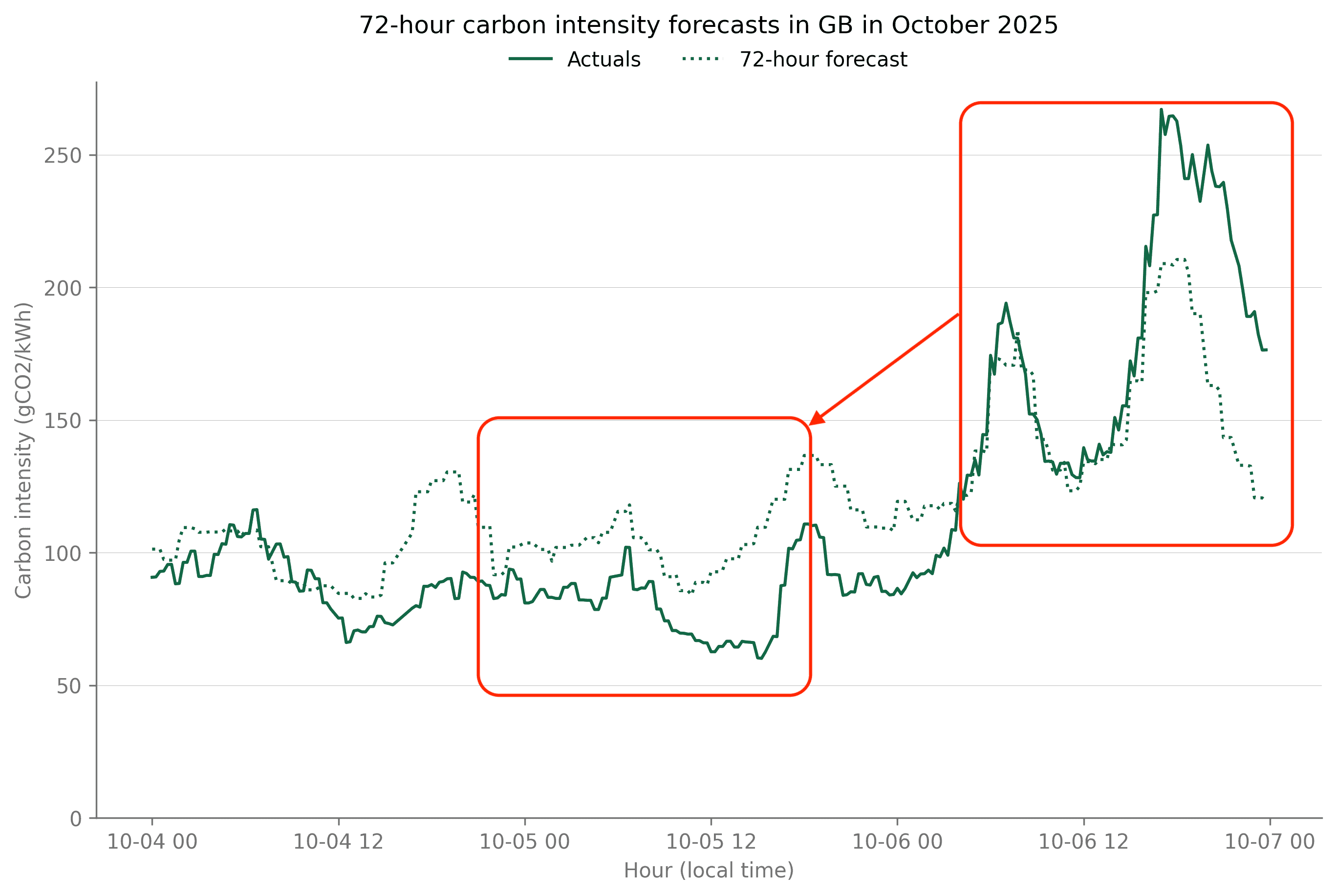

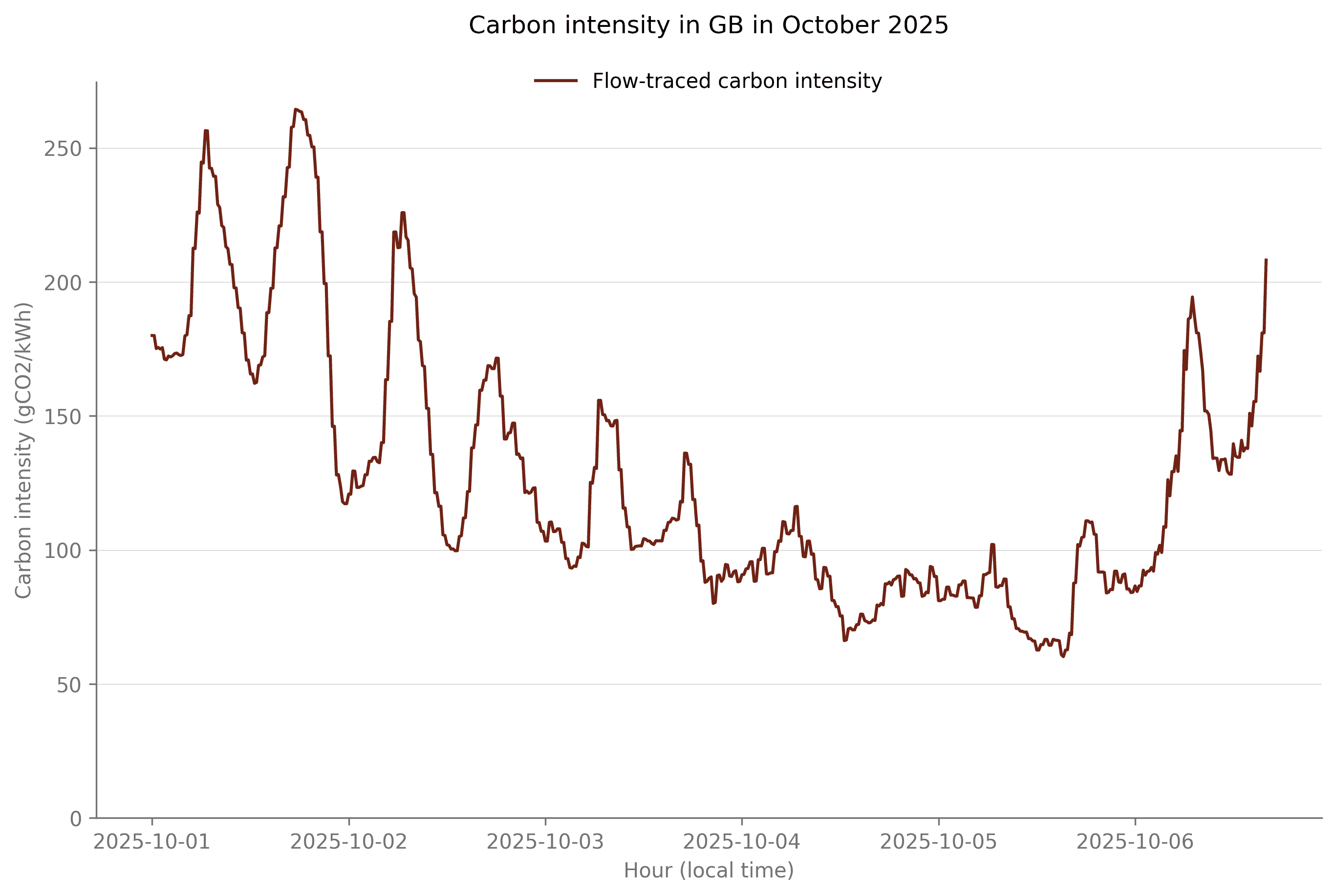

The benefits of forecast data

Let’s take a recent example to understand the benefits of forecast data. In the first week of October 2025, Storm Amy hit Europe, and thanks to high wind speeds, several European grids broke renewable energy generation records. However, very low wind speeds in the second week of October led to spikes in carbon intensity as well as electricity prices. In such situations, 72-hour forecasts of electricity prices and carbon intensity enable shifting IT loads to times of lower cost and emissions.

(Forecasted and actual carbon intensity in GB, October 2025. There is a big increase towards the end of the window, and emissions could be reduced by shifting the workload to an earlier time.)

With Electricity Maps forecasts, the spikes in carbon intensity on the 6th of October could have been predicted three days in advance, and flexible loads could have been shifted to different days, or at least to a different time of day. In this example, shifting workloads could have cut emissions by half. The ability to shift loads between hours or days naturally depends on the specific requirements of each workload and the flexibility each has, both in time and space, which leads us to the benefits of automation.

The benefits of automations

Automated load-shifting removes the need for individuals to make decisions manually, whether based on historical, real-time, or forecasted data, and on the constraints of each workload. It enables seamless integration of load-shifting into your workflows, maximizing the potential for emissions and cost reduction while minimizing the impact on your team’s and clients’ work.

Each workload will likely have a set of constraints regarding where and when it should run, as well as characteristics such as its duration. The goal is to seamlessly optimize the scheduling of each workload to achieve a desired outcome. This outcome could, for example, be to minimize electricity cost (by running at times of low day-ahead price), to minimize electricity emissions (by running at times of low carbon intensity), or to help grid balancing (by running at times of low net load). This can be implemented with the Carbon Aware SDK from the Green Software Foundation (GSF) or with the carbon-aware optimizer from the Electricity Maps API (1), where users can specify the duration, start and end times, available locations, and optimization metric to obtain the optimal time and location to run the workload.

(Electricity Maps' Carbon Aware Optimizer endpoint, along with a sample request and response)
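To illustrate the kind of search such an optimizer performs, here is a minimal brute-force sketch over candidate locations and start hours, subject to a duration, an earliest start, and a deadline, for one chosen metric. The `Job` and `optimize` names are hypothetical; this is not the Electricity Maps optimizer or the GSF Carbon Aware SDK, and the forecasts are made-up numbers.

```python
from dataclasses import dataclass


@dataclass
class Job:
    duration_h: int
    earliest_start: int   # first allowed start hour (index into the forecasts)
    latest_end: int       # hour index by which the job must have finished (exclusive)
    locations: list       # candidate locations the job may run in


def optimize(job, forecasts):
    """Brute-force search over locations and start hours.

    forecasts maps each location to hourly values of ONE metric (for example
    carbon intensity or day-ahead price). Returns (location, start, mean).
    """
    best = None
    for location in job.locations:
        series = forecasts[location]
        last_start = min(job.latest_end, len(series)) - job.duration_h
        for start in range(job.earliest_start, last_start + 1):
            mean = sum(series[start:start + job.duration_h]) / job.duration_h
            if best is None or mean < best[2]:
                best = (location, start, mean)
    if best is None:
        raise ValueError("no feasible time slot within the constraints")
    return best


# Hypothetical 6-hour forecasts for two locations and two metrics.
forecasts = {
    "carbon_intensity": {"A": [300, 250, 100, 120, 280, 300],
                         "B": [200, 180, 160, 150, 170, 190]},
    "price": {"A": [50, 40, 90, 95, 60, 55],
              "B": [70, 65, 60, 62, 68, 75]},
}
job = Job(duration_h=2, earliest_start=1, latest_end=6, locations=["A", "B"])
for metric in ("carbon_intensity", "price"):
    print(metric, optimize(job, forecasts[metric]))
```

Even in this toy case the carbon-optimal and price-optimal slots differ, which is why the optimization metric is an explicit input. A net-load forecast could be plugged in the same way to target grid balancing.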

The untapped potential of load-shifting

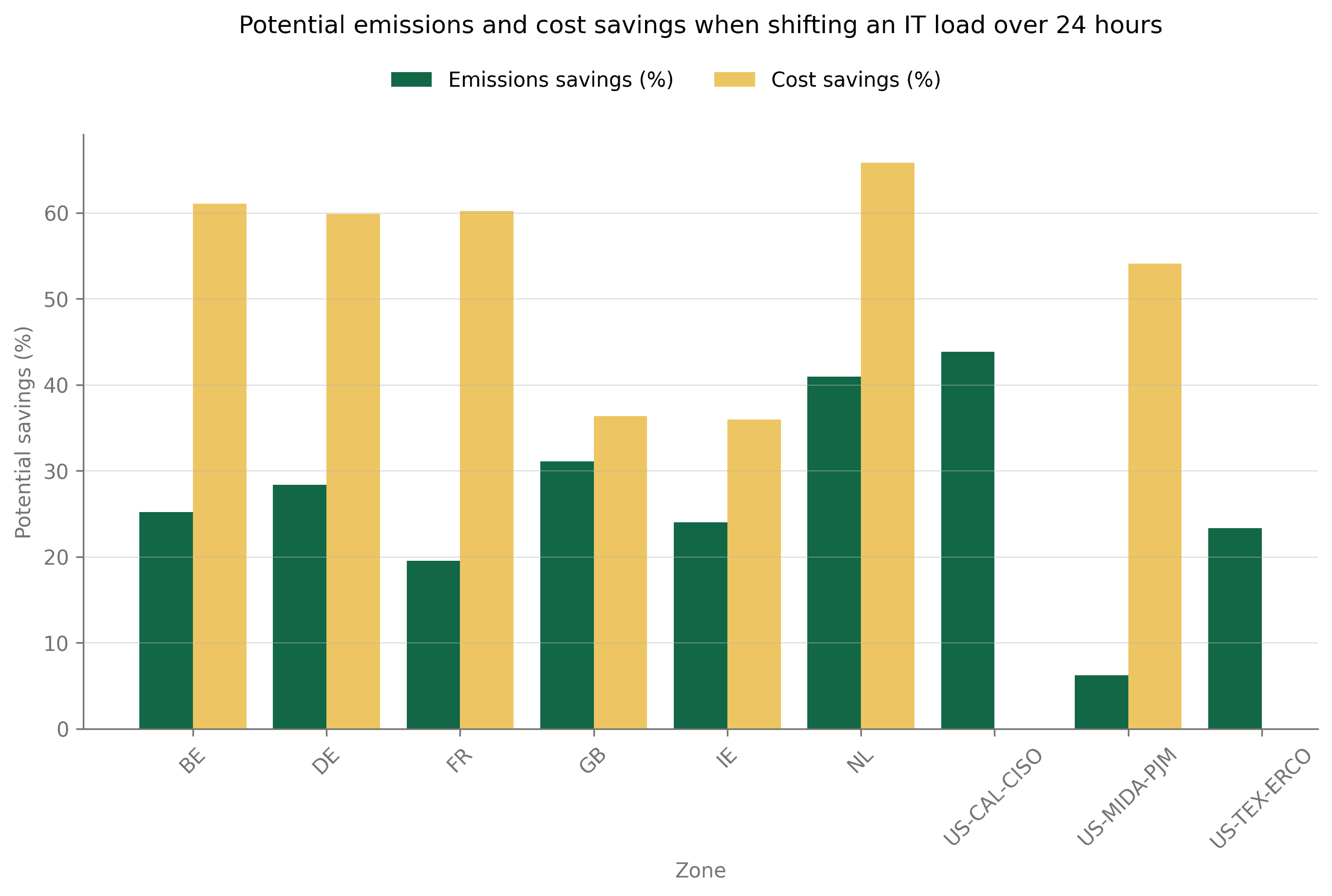

The potential of load-shifting to reduce IT emissions and costs is huge! As an estimate of what savings can be achieved with load-shifting in time, we present below the average emissions and cost reduction of shifting flexible loads over a period of 24 hours in several grids worldwide.

The potential savings were computed by calculating the average emissions and cost reductions of moving workloads over a 24-hour window for each hour of 2024. Price data was not available for US-CAL-CISO and US-TEX-ERCO. Moving workloads across a 24-hour window usually reduces their emissions by 30% and their cost by 50%.
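The exact procedure behind these figures is not spelled out here, so the sketch below shows one plausible interpretation, labeled as an assumption: for every hour h, move a 1-hour job to the lowest-value hour within [h, h + 24) and average the relative reductions over all hours. Run it on a year of hourly carbon intensity or prices for a grid; the short series in the example is hypothetical.

```python
# One way to estimate the average reduction from shifting flexible load in time:
# for every hour h, move a 1-hour job to the lowest-value hour in [h, h + window_h)
# and average the relative reductions. This interpretation is an assumption.


def average_shift_reduction(series, window_h=24):
    """Mean relative reduction over all hours that have a full look-ahead window."""
    reductions = []
    for h in range(len(series) - window_h + 1):
        if series[h] <= 0:
            # Relative reductions are undefined for zero or negative values
            # (negative prices do occur), so such hours are skipped here.
            continue
        best = min(series[h:h + window_h])
        reductions.append((series[h] - best) / series[h])
    if not reductions:
        raise ValueError("series too short or no positive values")
    return sum(reductions) / len(reductions)


print(average_shift_reduction([100, 50, 100, 200], window_h=2))
```

Note that when negative prices fall inside a window, the computed reduction for that hour can exceed 100%, so price results deserve a closer look than carbon intensity results.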

Not all loads are flexible in time and can be shifted to different hours, but shifting those that can will significantly reduce your IT cost and carbon footprint. IT workloads also tend to be more flexible in space than in time, and here the benefits can be huge as well: carbon intensity and electricity prices can easily vary by a factor of 10 between neighboring countries or grids.

Set the best practices with automated load-shifting

Companies and working groups at the forefront of sustainability in IT are already going beyond the first steps of a Sustainable IT Monitoring journey. They are implementing space and time load shifting to leverage the full potential of emissions and cost reductions.

A few examples from our customers:

Google shifts data center workloads based on Electricity Maps forecasts of carbon intensity for the next 48 hours (2).

The Green Software Foundation released a carbon-aware SDK (3) that enables creating carbon-aware applications based on Electricity Maps forecasts. Microsoft leveraged the SDK to develop time and space load-shifting solutions for Vestas (4).

Monta offers a Smart Charging feature to hundreds of thousands of EVs (5).

What you will learn in next week’s lesson

This week marks the last deep dive into the steps of the Sustainable IT Monitoring journey. The sixth and last lesson, next week, will summarize everything we learned throughout this course, along with key takeaways.

Please submit any feedback you have about the course to help us improve. Also, take this opportunity to submit any questions on any topic we have covered since the first week, and we will answer them in the last lesson next week: 1-min survey

References:

4. Deep dive on measuring IT emissions with more granularity

Recap of previous lessons

In the first two lessons, we introduced the concept of Sustainable IT Monitoring, some basics of electricity grids, and relevant grid signals. We also discussed key considerations when selecting these signals and the challenges associated with sourcing them. In the last lesson, we introduced the Sustainable IT Monitoring journey. It begins with measuring IT emissions with higher granularity, continues with adding further insights, and then leverages real-time data. Automated load-shifting is the last step of this journey.

Access last week’s lesson here for more details on the different steps of a Sustainable IT Monitoring journey.

Today, we will take a deep dive into the first two steps of this journey: measuring IT emissions with higher granularity and adding more grid signals to derive further insights.

In today’s lesson

The start of your Sustainable IT Monitoring journey

The first steps of Sustainable IT Monitoring build the knowledge foundation for all the following steps. These steps are not only crucial to better understand your IT usage, emissions, and costs, but also build your ability to manage and reduce them down the line.

The starting point is to gain visibility, and there are two avenues to explore: increasing the granularity of the signals you use and the measurements you make, or increasing the number of signals and data you track. We dive deeper into each of these in the sections below. Increasing granularity brings challenges not only for gathering granular grid data but also for gathering granular usage data. The former was covered in the first lessons of this course, and the latter is explored in the coming section.

Measure emissions with higher granularity

Measuring emissions with higher granularity is challenging in numerous ways. First, IT practitioners must know the right methodology to account for IT emissions. Once the methodology is known, we have to tackle the challenge of gathering the necessary data. This includes granular grid data, but first and foremost, granular usage data. The following three sections provide more details to help you approach each of these challenges.

Methodologies for accounting emissions

The best place to start, if you are unsure about how to measure emissions with higher granularity, is to review existing guidance and regulations to ensure compliance with them. Regarding what grid data to use, we already covered important regulations in Lesson 2, including the Greenhouse Gas Protocol Scope 2 Guidance (1) and SBTi Corporate Targets (2). Ideally, the same type of guidance would exist for measuring emissions from IT. Unfortunately, that’s not yet the case.

Some industry working groups, though, are emerging to develop guidelines on how to measure and reduce emissions from IT. One of them is the Green Software Foundation (3), which aims to build standards, tooling, and best practices for green software. Among the resources it has developed, the Software Carbon Intensity (SCI) Specification indicates how to calculate the rate of carbon emissions for a software system, accounting for both embodied and operational emissions.

The Green Software Foundation is working on adapting the SCI specification for specific use cases such as AI or web applications. They are also progressing on methodologies for calculating the emissions of hardware (4).

(Formula detailing the SCI Specification from Green Software Foundation)
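As we understand the SCI specification, the score is SCI = ((E × I) + M) per R, where E is the energy consumed by the software (kWh), I is the carbon intensity of that electricity (gCO2eq/kWh), M is the embodied emissions attributed to the software (gCO2eq), and R is the functional unit (for example, per API call or per user). The sketch below simply evaluates that formula; the input values are hypothetical.

```python
def sci(energy_kwh, intensity_g_per_kwh, embodied_g, functional_units):
    """Software Carbon Intensity: ((E * I) + M) per R, in gCO2eq per functional unit."""
    if functional_units <= 0:
        raise ValueError("R (number of functional units) must be positive")
    operational_g = energy_kwh * intensity_g_per_kwh  # operational emissions, E * I
    return (operational_g + embodied_g) / functional_units


# Hypothetical: 2 kWh at 250 gCO2eq/kWh, 100 g of embodied emissions, 1,000 API calls.
print(sci(2, 250, 100, 1_000))  # 0.6 gCO2eq per API call
```

Because I enters the formula directly, using a more granular carbon intensity signal changes the SCI score without any change to the software itself.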

Gather Usage Data

Energy usage data is the cornerstone of all methodologies for assessing IT-related emissions. Yet obtaining it can often be one of the most challenging steps. This section provides practical guidance on how to collect, compare, and estimate this data effectively.

Provider Tools and Methodological Differences

Many IT service providers now include energy and emissions information as part of their offerings. However, these data sets vary in detail, scope, and methodology, making comparison difficult across providers.

Google Cloud and AWS both offer carbon footprint features: Google Cloud’s Carbon Footprint (5) and the AWS Customer Carbon Footprint Tool (6). However, their methodologies can differ significantly. Since this summer, the AWS tool has included location-based emissions for energy usage, instead of market-based emissions only. The market-based approach allows companies to account for renewable energy certificates, often used to claim 100% renewable energy. As a result, it does not reflect the electricity actually consumed from the grid and the associated carbon emissions. The location-based approach accounts for the emissions of electricity directly consumed from the local grid and provides a deeper understanding of which electricity sources are consumed and how to reduce electricity emissions. Even though both tools now provide location-based emissions, which allows a more meaningful comparison, their methodologies are still not aligned: Google Cloud uses hourly emission factors, while AWS uses yearly emission factors.

The lack of consistency in methodology between providers makes it challenging for organizations to harmonize their emissions accounting. Understanding these methodological differences is therefore essential before aggregating or comparing data from multiple sources.

Open Source and Vendor-Agnostic Tools

To overcome inconsistencies between providers, open-source and vendor-agnostic tools can be valuable alternatives. These solutions help organizations gather and compare energy usage data in a standardized manner, regardless of cloud or infrastructure provider.

Following the above example of cloud emissions, Cloud Carbon Footprint (7) is one such tool that enables monitoring and reduction of emissions across major cloud platforms, including AWS, Google Cloud, and Microsoft Azure. It provides a unified view of energy consumption across providers.

Another helpful tool is Kepler (8) (Kubernetes Efficient Power Level Exporter), which measures energy consumption at the container, pod, virtual machine, and process levels. It gathers data directly from hardware sensors and attributes energy use according to resource utilization, offering a deep understanding of workloads’ energy consumption.
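The idea of attributing measured energy according to resource utilization can be illustrated with a proportional split. The sketch below divides a node's measured energy among workloads by their share of CPU time. It is a deliberately simplified, hypothetical model for illustration, not Kepler's implementation, whose models are more detailed.

```python
# Split a node's measured energy across workloads in proportion to their CPU time.
# Deliberately simplified model for illustration; not Kepler's implementation,
# which uses more detailed models and counters.


def attribute_energy(node_energy_j, cpu_time_s):
    """Return {workload: joules}, proportional to each workload's share of CPU time."""
    total_cpu = sum(cpu_time_s.values())
    if total_cpu <= 0:
        raise ValueError("total CPU time must be positive")
    return {name: node_energy_j * t / total_cpu for name, t in cpu_time_s.items()}


# Hypothetical: 3,600 J measured on a node while three pods ran.
result = attribute_energy(3600, {"pod-a": 30, "pod-b": 10, "pod-c": 20})
print(result)
```

A proportional split always conserves the node total, which makes it a reasonable first approximation, but it ignores idle power and differences in how intensively each workload uses the hardware.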

Boavizta (9) is another important initiative. It brings together organizations working on methods and open resources to evaluate the environmental impact of digital activities. Its datasets and tools, available under free licenses, support energy measurement and emissions estimation across a variety of IT contexts.

Using these open and collaborative resources allows organizations to maintain transparency and enhance comparability, independent of specific vendors.

Estimating Energy Use with Load Profiles

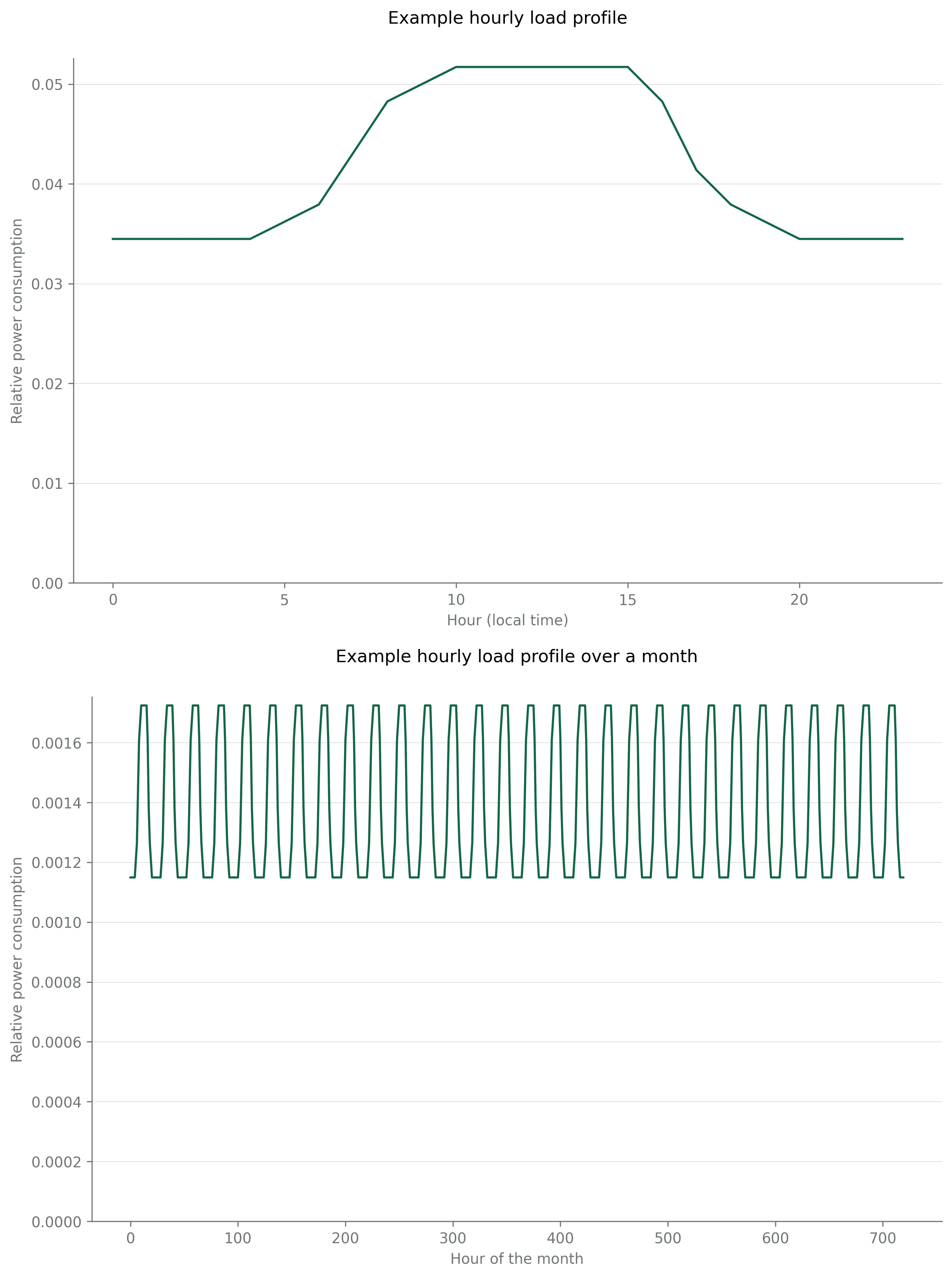

In some cases, detailed or granular energy usage data may not be directly available. In such situations, estimations based on load profiles can serve as a practical alternative. A load profile represents how electricity consumption changes over time—for instance, between day and night, or between weekdays and weekends.

Consider an example where the monthly electricity consumption of an IT system is known to be 50 MWh, but hourly data is not available. If we know that the system load is roughly 50% higher* during the daytime than at night, we can construct a daily load profile that reflects this pattern. This indicative profile can then be repeated over 30 days to represent a full month, which yields the load profiles in the figures below. Refer to the end of this page for more details about how we built them.

*Please note that the 50% figure is indicative; the actual figure depends on the type of equipment being measured and on many other variables, even within the same type of IT infrastructure. Try to find a realistic number for your equipment from available meters or from specialists within your organization.

(Two graphs, one after the other: first, a daily load profile where the peak is 50% higher than the trough; second, the same profile repeated over 30 days.)

Now, if we scale the monthly load profile so that its hourly values sum to 1 and multiply it by the monthly total consumption (50 MWh in our case), we get the hourly electricity load over the entire month. While this method offers only an approximation, it gives a much more realistic representation of actual electricity load than a single aggregate figure spread evenly without any specific profile. Over time, the load profile can be refined to include seasonal variations, differences between weekdays and weekends, or other known consumption behaviors specific to your organization.

(Graph comparing a static average profile with a constructed profile, and what the actual profile might look like.)
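The profile-and-scale approach can be sketched in a few lines. The example below builds a daily profile using the assumptions described at the end of this page (night load 100, day load 150, a 6-hour ramp-up and a 5-hour ramp-down) and repeats it over 30 days. It then normalizes the profile so it sums to 1 before multiplying by the 50 MWh monthly total. The ramp start times (06:00 and 17:00) and the linear ramps are our own hypothetical choices, so the exact values may differ from the course's table.

```python
# Build an indicative hourly load profile and scale it to a known monthly total.
# Hypothetical assumptions: night load 100, day load 150, a 6-hour linear
# ramp-up starting at 06:00 and a 5-hour linear ramp-down starting at 17:00.


def daily_profile(base=100.0, peak=150.0, ramp_up_start=6, ramp_up_h=6,
                  ramp_down_start=17, ramp_down_h=5):
    """Return 24 relative load values, one per hour of the day."""
    profile = []
    for hour in range(24):
        if hour < ramp_up_start:
            value = base
        elif hour < ramp_up_start + ramp_up_h:
            step = hour - ramp_up_start + 1
            value = base + (peak - base) * step / ramp_up_h
        elif hour < ramp_down_start:
            value = peak
        elif hour < ramp_down_start + ramp_down_h:
            step = hour - ramp_down_start + 1
            value = peak - (peak - base) * step / ramp_down_h
        else:
            value = base
        profile.append(value)
    return profile


def monthly_hourly_load(monthly_total_mwh, days=30):
    """Repeat the daily profile, normalize it to sum to 1, and scale to the total."""
    month = daily_profile() * days
    total = sum(month)
    return [monthly_total_mwh * value / total for value in month]


load = monthly_hourly_load(50.0)
flat = 50.0 / len(load)  # the same total spread evenly, for comparison
print(len(load), round(sum(load), 6), round(min(load), 4), round(max(load), 4), round(flat, 4))
```

The result keeps the 50 MWh total and the 1.5 ratio between day and night hours, while the flat alternative would assign the same value to every hour.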

Gathering reliable energy usage data requires navigating differing methodologies among providers, complementing these with open-source measurement tools, and developing estimation techniques when direct data is unavailable. Combining these approaches allows organizations to build a more complete and actionable understanding of their IT energy footprint. This understanding forms the foundation for effective monitoring and meaningful reduction of emissions over time.

Gather granular grid data

Once gathered at a granular level, energy usage data must be combined with granular grid data to derive as many insights as possible from historical IT usage. Lessons 2 and 3 already covered at large the importance of granularity for grid data, and we refer to these lessons for more details.

Add more signals to derive further insights

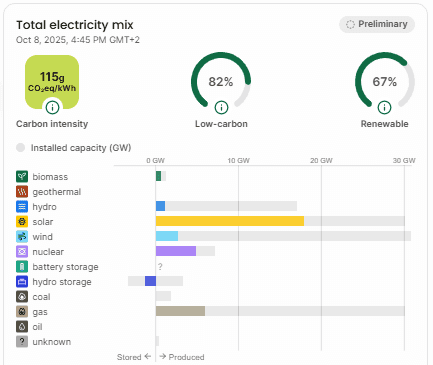

Lessons 1 and 2 introduced several grid signals that exist besides the flow-traced carbon intensity, such as market electricity prices, electricity load (also called electricity demand), the flow-traced electricity mix, or flow-traced renewable energy and carbon-free energy percentages.

Explore on your own!

As a refresher, you can explore these different signals on our live and free Map. You can click on layers at the bottom right of the screen to switch between electricity signals and visualize the map according to the signal of your choice. The left panel that opens up when clicking on a country also includes graphs of all signals.

Our Live Map

All these signals can prove relevant in your Sustainable IT Monitoring journey to gain further insights about:

Why emissions are increasing/decreasing, and how to better manage these fluctuations

Where to achieve cost and emissions reductions simultaneously

How to support grid balancing while optimizing your operations

These signals are significantly correlated with each other while telling different stories. Times of low emissions often coincide with times of low prices, but not always, and this varies from one grid to another. Because these signals are (imperfectly) correlated, leveraging all available grid signals offers significant potential for identifying simultaneous cost and emissions reductions while helping with grid balancing.

Another reason for leveraging more signals is to better understand the grid’s behaviour, and thus be better prepared to anticipate future fluctuations. Electricity mix data is key to explaining the fluctuations of grid dispatch and how they translate into carbon intensity variations. With a more solid understanding of these dynamics, your customers and your teams can establish best practices that lead to emissions and cost savings.

For example, in California, carbon intensity tends to be lower in the middle of the day thanks to significant solar generation, so running workloads around midday is a good practice there. In Ontario, carbon intensity tends to be lower at night thanks to lower gas generation and a clean baseload of nuclear and hydro, so running workloads at night is a good practice there.

(Electricity Mix and Carbon Intensity in California, and Ontario, comparatively)
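Patterns like these can be derived from historical data by averaging carbon intensity per hour of the day. The sketch below assumes readings are timestamped in the grid's local time (so that "midday" means local midday) and uses a hypothetical helper name, `cleanest_hour_of_day`, with invented values.

```python
from collections import defaultdict
from datetime import datetime


def cleanest_hour_of_day(readings: list[tuple[datetime, float]]) -> tuple[int, dict[int, float]]:
    """Average carbon intensity (gCO2eq/kWh) per local hour of day and return the cleanest hour."""
    totals: dict[int, float] = defaultdict(float)
    counts: dict[int, int] = defaultdict(int)
    for timestamp, ci in readings:
        totals[timestamp.hour] += ci
        counts[timestamp.hour] += 1
    averages = {hour: totals[hour] / counts[hour] for hour in totals}
    return min(averages, key=averages.get), averages


# Hypothetical readings over two days, at 3:00 and 13:00 local time.
readings = [
    (datetime(2024, 6, 1, 3), 250.0), (datetime(2024, 6, 1, 13), 120.0),
    (datetime(2024, 6, 2, 3), 270.0), (datetime(2024, 6, 2, 13), 140.0),
]
best_hour, averages = cleanest_hour_of_day(readings)
print(best_hour, averages)
```

In practice you would feed it a year or more of hourly data, possibly split by season, since these daily patterns can shift over the year.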

Adding granularity is not the only way to improve the visibility of IT emissions; adding new signals brings further insights as well. This enables a more holistic understanding of IT usage, which raises awareness and increases the potential for creating best practices that reduce costs and emissions.

Additional details on the load profiles

In this course, we introduced load profiles as a tool to estimate energy usage with greater precision when only aggregated numbers - such as monthly consumption - are available. We provide more details in this section on how we built the one used in our example.

In this example, we want to reflect an IT load that is roughly 50% higher during the daytime than at night, and build profiles for one day and for a full month. We start with a base value of 100 (in relative units), which represents the electricity consumption at night. Following our initial assumption, electricity consumption during the day will be 150. We assume that the system takes 6 hours to ramp up to full load and 5 hours to ramp down in the evening, and we fill in intermediate values for these hours, which leads to the values in column Load Step 1. Dividing each hourly value by the daily total (2,900) gives the normalized load profile (column Load profile), whose 24 values sum to 1:

| Hour | Load Step 1 | Load profile |
|---|---|---|
| 0 | 100 | 0.0345 |
| 1 | 100 | 0.0345 |
| 2 | 100 | 0.0345 |
| 3 | 100 | 0.0345 |
| 4 | 100 | 0.0345 |
| 5 | 105 | 0.0362 |
| 6 | 110 | 0.0379 |
| 7 | 125 | 0.0431 |
| 8 | 140 | 0.0483 |
| 9 | 145 | 0.0500 |
| 10 | 150 | 0.0517 |
| 11 | 150 | 0.0517 |
| 12 | 150 | 0.0517 |
| 13 | 150 | 0.0517 |
| 14 | 150 | 0.0517 |
| 15 | 150 | 0.0517 |
| 16 | 140 | 0.0483 |
| 17 | 120 | 0.0414 |
| 18 | 110 | 0.0379 |
| 19 | 105 | 0.0362 |
| 20 | 100 | 0.0345 |
| 21 | 100 | 0.0345 |
| 22 | 100 | 0.0345 |
| 23 | 100 | 0.0345 |
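The normalization can be reproduced in a few lines of code. The sketch below hard-codes the Load Step 1 values from the table, divides them by their daily total to obtain the daily profile, and then distributes a monthly consumption figure across hours. How the monthly profile was built is not detailed here, so the sketch assumes one simple option: repeating the same daily shape for every day of a 30-day month. The monthly total of 87,000 kWh is a made-up value.

```python
# Hourly values (hours 0-23) from the Load Step 1 column, in relative units.
LOAD_STEP_1 = [100, 100, 100, 100, 100, 105, 110, 125, 140, 145,
               150, 150, 150, 150, 150, 150, 140, 120, 110, 105,
               100, 100, 100, 100]


def normalize(values: list[float]) -> list[float]:
    """Scale values so that they sum to 1."""
    total = sum(values)
    return [v / total for v in values]


def monthly_profile(daily_loads: list[float], days: int = 30) -> list[float]:
    """Assumption: every day of the month follows the same daily shape."""
    return normalize(daily_loads * days)


def distribute(total_kwh: float, profile: list[float]) -> list[float]:
    """Split an aggregated consumption figure across hours according to a profile."""
    return [total_kwh * share for share in profile]


daily_profile = normalize(LOAD_STEP_1)
print(round(daily_profile[0], 4), round(daily_profile[12], 4))  # 0.0345 0.0517

# Hypothetical monthly consumption, spread over 30 x 24 = 720 hours.
hourly_kwh = distribute(87_000, monthly_profile(LOAD_STEP_1))
print(round(hourly_kwh[0], 1), round(hourly_kwh[12], 1))
```

With these numbers, a night hour receives 100 kWh and a peak daytime hour 150 kWh, preserving the 50% day/night ratio of the profile.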

What you will learn in next week’s lesson

In Lesson 3, we introduced the maturity journey of Sustainable IT Monitoring. It starts with measuring IT emissions with higher granularity, continues with adding further insights, and then leverages real-time data. Automated load-shifting is the last step of this journey.

Today, we covered the first two steps of this maturity journey. In next week’s lesson, we will dive into how leveraging real-time data provides additional benefits in terms of emissions and cost reductions.

If you found this useful (or not, but read it anyway), please spare a minute to help us improve the course going forward: 1-min survey

3. The Sustainable IT Monitoring journey: what does it look like?

Recap of previous lessons

In the first week’s lesson, we introduced the concept of Sustainable IT Monitoring and its importance. We also introduced some basics of the electricity grids and relevant grid signals. In the second week’s lesson, we covered the key considerations when selecting grid signals, including granularity, flow-traced data, compliance with regulations, and auditability. We also introduced where to source this data from and the challenges associated with it.

Access last week’s lesson here for more details.

In today’s lesson

Today, we will introduce in more detail the steps involved in implementing Sustainable IT Monitoring and map them onto a maturity journey. This journey starts with measuring emissions with higher granularity and can go all the way to spatial and temporal load-shifting, with different data needs along the way.

Overview of the Sustainable IT Monitoring journey

The Sustainable IT Monitoring journey starts with measuring electricity consumption and emissions with increasing granularity, but it goes well beyond that. Ultimately, it leads to automated spatial and temporal load-shifting to reduce IT costs and emissions. This is one aspiration of such a journey, but there are several steps along the way, and the following sections introduce each of them.

Step 1: Measure IT emissions with higher granularity

The first and foremost step on this journey is to start measuring and monitoring consumption and emissions. IT is one of the fastest-growing sources of corporate emissions and costs. However, most organizations don’t have the visibility they need.

Measuring emissions is the first step toward compliance, transparency, and long-term reduction. It increases visibility, ensures compliance with current and future standards, unlocks cost and emissions reduction insights, and increases customers' trust.

The extent and accuracy of the insights extracted also depend on the granularity of the data used. Switching from a yearly to a quarterly emission factor, for example, already unlocks value by revealing seasonal trends. Increasing granularity up to hourly factors unlocks the full value of monitoring: it identifies which hours and locations are responsible for the most emissions and gives a better understanding of where emissions come from. This is the first step toward better managing emissions and costs.
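A small worked example shows why granularity matters. Suppose, hypothetically, that a workload uses most of its energy during the hours when the grid is most carbon-intensive. A single average factor (here simply the unweighted mean of the hourly values, standing in for a yearly factor) hides this, while hourly factors capture it. The numbers below are invented for illustration.

```python
def emissions_flat_factor(energy_kwh: list[float], intensity: list[float]) -> float:
    """kgCO2eq using one average factor for the whole period (unweighted mean of hourly factors)."""
    average_factor = sum(intensity) / len(intensity)
    return sum(energy_kwh) * average_factor / 1000


def emissions_hourly_factors(energy_kwh: list[float], intensity: list[float]) -> float:
    """kgCO2eq matching each hour's energy use with that hour's carbon intensity."""
    if len(energy_kwh) != len(intensity):
        raise ValueError("series must have the same length")
    return sum(e * ci for e, ci in zip(energy_kwh, intensity)) / 1000


energy_kwh = [10, 10, 30, 30]       # hypothetical consumption per hour (kWh)
intensity = [100, 100, 400, 400]    # hypothetical carbon intensity per hour (gCO2eq/kWh)

print(emissions_flat_factor(energy_kwh, intensity))     # 20.0
print(emissions_hourly_factors(energy_kwh, intensity))  # 26.0
```

Here the average factor understates emissions by about 23% (20 vs 26 kgCO2eq) because it ignores when the energy was actually used.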

The challenge of gathering granular data does not only apply to grid data but also to your consumption data, such as your IT energy usage. We will provide some resources to help you navigate through these challenges as part of next week’s lesson, as we take a deep dive into this step of the maturity journey.

Step 2: Include further insights about electricity consumption

Measuring emissions is the first step, and increasing granularity is the way to maximize insights unlocked, paving the way for emissions reduction. However, as highlighted during the first lessons, many more signals can provide insights about the electricity consumed beyond flow-traced carbon intensity. Some of these signals are: the renewable energy and carbon-free energy percentage, the electricity mix, and the electricity prices.

Companies are increasingly committing to matching their electricity consumption with renewable or carbon-free (including nuclear) electricity. The renewable and carbon-free percentages of the electricity available on the grid fluctuate in space and time, and so do the shares of these sources in IT electricity consumption.
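Because both consumption and the grid's renewable share vary hour by hour, the renewable percentage of IT consumption is best computed as a consumption-weighted average. The sketch below assumes hourly renewable percentages for the grid your infrastructure draws from; it reflects the grid mix only and does not account for contractual instruments such as renewable energy certificates. The helper name and values are hypothetical.

```python
def consumption_weighted_share(energy_kwh: list[float], share_pct: list[float]) -> float:
    """Percentage of consumed electricity from a given category (e.g. renewable),
    weighting each hour's grid percentage by the energy used in that hour."""
    if len(energy_kwh) != len(share_pct):
        raise ValueError("series must have the same length")
    total = sum(energy_kwh)
    if total == 0:
        raise ValueError("no consumption in the period")
    return sum(e * p for e, p in zip(energy_kwh, share_pct)) / total


energy_kwh = [10, 30]       # hypothetical hourly consumption (kWh)
renewable_pct = [80, 20]    # hypothetical grid renewable percentage per hour

print(consumption_weighted_share(energy_kwh, renewable_pct))  # 35.0
```

The simple average of the two hourly percentages would be 50%, overstating the renewable share because most consumption happened in the less renewable hour. The same function works for the carbon-free percentage.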

Fluctuations in flow-traced carbon intensity can seem opaque to decision-makers. Providing information on the full electricity mix makes it possible to understand what drives these fluctuations, what distinguishes different grids, and where opportunities lie. This unlocks more strategic decision-making.

Electricity prices may correlate with carbon intensity, but not perfectly: times of low emissions are not necessarily times of low prices, and vice versa. Measuring IT emissions alone can already enable cost reductions alongside emissions reductions, but measuring both indicators leads to better management.

This image illustrates that there is a difference between "Renewable" and "Low-carbon"

This image shows the carbon intensity and electricity prices for the same time period, illustrating how the two are not always aligned.

Step 3: Leverage real-time insights about sources, prices, and emissions

The Sustainable IT Monitoring journey naturally starts with historical reports and dashboards presenting end-of-month statistics. These are a first step in the journey: they enable a better understanding of the current situation and show where opportunities lie and where management is most needed. Moving closer to real time is the necessary third step in this maturity journey.

With real-time data, your team and customers can start taking action by identifying times of clean and cheap electricity and times of highly carbon-intensive electricity. Real-time data empowers decision-making on whether now is a good time to run compute or whether flexible jobs should be postponed. It also enables live decision-making leveraging spatial flexibility, such as deciding on where to run a workload based on the current carbon intensity of different grids with available compute resources.
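A real-time decision of this kind can start as a simple rule: compare the current carbon intensity with a recent baseline and postpone flexible jobs when the grid is dirtier than usual. The sketch below is one hypothetical rule; the function name `should_run_now` and the 0.9 threshold ratio are arbitrary assumptions, not recommendations.

```python
def should_run_now(current_ci: float, recent_ci: list[float],
                   threshold_ratio: float = 0.9, critical: bool = False) -> bool:
    """Decide whether to run a job now based on live carbon intensity (gCO2eq/kWh).

    Critical jobs always run. Flexible jobs run only if the current intensity is
    at most `threshold_ratio` times the average of the recent readings.
    """
    if critical:
        return True
    if not recent_ci:
        raise ValueError("need at least one recent reading as a baseline")
    baseline = sum(recent_ci) / len(recent_ci)
    return current_ci <= threshold_ratio * baseline


recent = [200.0, 250.0, 300.0]  # hypothetical recent readings (baseline: 250)
print(should_run_now(180.0, recent))                 # True: cleaner than usual
print(should_run_now(260.0, recent))                 # False: postpone
print(should_run_now(260.0, recent, critical=True))  # True: critical jobs are not delayed
```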

With access to live carbon intensity data, an engineer could have identified on the morning of the 3rd of October that it was a good time to run a workload and take advantage of clean electricity. The same engineer would also have realized a few days later, on the afternoon of the 6th of October, that it would be better to postpone any non-critical jobs to avoid consuming carbon-intensive electricity.

The GB grid 1-3 October, showing low carbon intensity for the night between 2nd and 3rd:

The GB grid 1-6 October, showing high carbon intensity on the 6th of October, compared to the days prior:

In another example, if compute is available right now in data centers in both France and Germany, an engineer leveraging real-time data could decide to run a workload in France to reduce its carbon impact.
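The spatial version of the same decision picks, among the regions with available compute, the one whose grid is currently cleanest. In the sketch below, the helper name `pick_region`, the zone names, and the carbon intensity values are hypothetical placeholders rather than real readings.

```python
def pick_region(current_ci: dict[str, float], available: set[str]) -> str:
    """Return the available region with the lowest current carbon intensity (gCO2eq/kWh)."""
    candidates = {region: ci for region, ci in current_ci.items() if region in available}
    if not candidates:
        raise ValueError("no available region has carbon intensity data")
    return min(candidates, key=candidates.get)


live_ci = {"FR": 40.0, "DE": 350.0, "PL": 650.0}  # hypothetical live readings
print(pick_region(live_ci, available={"FR", "DE"}))  # FR
```

Leaving a region out of `available` mimics having no spare capacity there; the sketch ignores any other constraints a real scheduler would need to respect.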

Step 4: Spatial and temporal load-shifting

We’ve highlighted in the section above how real-time data brings new, actionable insights for decision-making based on how clean the electricity grid is. However, several limitations arise, such as: “How long will I need to postpone my workload? Will the carbon intensity of the grid get any better in the next 24 hours?” or “If I have the flexibility of running workloads during the night, how do I make sure they run at the cleanest and cheapest times?”

This introduces the last and most advanced step of the maturity journey: implementing automated spatial and temporal load-shifting. This step can even be considered to go beyond Sustainable IT Monitoring, as it leverages flexibility in load. With predictions of grid signals across multiple regions and days, IT practitioners can use the flexibility of their workloads to reduce costs and emissions, and even help with grid balancing. This will be the topic of our last lesson in this course.

Below, we're showcasing how our internal tool can identify the greenest time and place for a 2-hour compute job, when looking to schedule it in the next 36 hours.

(Image showing our internal tool. This example uses a 36-hour horizon to find the optimal location for a 2-hour compute job. We are looking at 3 GCP locations in Europe and their respective carbon intensity, forecasted for 36h.)
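The following is not the implementation of the tool shown above, but a minimal sketch of the same idea: slide a window the length of the job over each region's forecast and keep the window with the lowest average carbon intensity. It assumes hourly forecasts that start at the current hour and a job with constant power draw (so the lowest average intensity also means the lowest emissions); the function name, region names, and values are hypothetical.

```python
from __future__ import annotations


def best_slot(forecasts: dict[str, list[float]], job_hours: int = 2,
              horizon_hours: int = 36) -> tuple[str, int, float]:
    """Find the region and start hour with the lowest average forecast carbon intensity.

    `forecasts` maps each region to hourly forecast values (gCO2eq/kWh), with index 0
    being the current hour. Returns (region, start offset in hours, average intensity).
    """
    best: tuple[str, int, float] | None = None
    for region, series in forecasts.items():
        window = series[:horizon_hours]
        # Every start hour for which the whole job still fits inside the horizon.
        for start in range(len(window) - job_hours + 1):
            average = sum(window[start:start + job_hours]) / job_hours
            if best is None or average < best[2]:
                best = (region, start, average)
    if best is None:
        raise ValueError("no forecast covers the job duration")
    return best


# Hypothetical 4-hour forecasts for three regions (a real horizon would be 36 hours).
forecasts = {
    "region-a": [300.0, 280.0, 260.0, 250.0],
    "region-b": [200.0, 100.0, 120.0, 400.0],
    "region-c": [150.0, 150.0, 150.0, 150.0],
}
print(best_slot(forecasts))  # ('region-b', 1, 110.0)
```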

What you will learn in next week’s lesson

Today, we introduced the different steps of the Sustainable IT Monitoring journey, from the basics of measuring emissions to leveraging flexibility in IT loads, which goes beyond monitoring itself. In the coming weeks, we will take a deep dive into each of these steps, starting with steps 1 and 2 next week.

If you found this lesson useful (or not, but read it anyway), please spare a minute to help us improve the course going forward: 1-min survey